As AI products move from experimentation to deployment, teams are becoming more selective about what they integrate into production. Raw model capability still matters, but it is no longer the only factor behind adoption. In real product environments, teams also need API access that is fast enough for interactive experiences, affordable enough for repeated use, and flexible enough to support different reasoning demands across different tasks.

That is why Gemini 3 Flash API is attracting interest from startups, product teams, and developers building practical AI systems. Its appeal is not just about underlying model performance. It is about whether the API can support modern workflows without introducing too much latency, too much operational cost, or too much friction between testing and deployment.

Why Gemini 3 Flash API Matters in Modern AI Workflows

Modern AI workflows are no longer limited to simple text generation. Teams now build assistants, search layers, internal copilots, multimodal tools, workflow automations, and customer-facing AI features that operate under real product constraints. In those environments, the value of an API is shaped by more than output quality alone.

Gemini 3 Flash API matters because it addresses a practical deployment question: how do teams access useful reasoning and multimodal capability through an API that still feels viable for day-to-day product use? For businesses and builders, that question is often more important than a pure model leaderboard comparison.

An API becomes meaningful when it fits how products are actually built, tested, and scaled. That is the context in which Gemini 3 Flash API becomes relevant.

How Gemini 3 Flash API Delivers Speed Without Sacrificing Usability

Speed is not simply an infrastructure detail. In AI products, it directly affects usability. If an API responds too slowly, even strong underlying capabilities can feel harder to use in practice. That matters in chat interfaces, AI copilots, support systems, and embedded productivity features where responsiveness helps determine whether a feature feels natural or frustrating.

Gemini 3 Flash API stands out because its speed contributes to product usability, not just benchmark appeal. Fast API responses help preserve interaction flow, reduce friction in repeated calls, and make it easier for teams to integrate AI into experiences that users rely on every day.

Why Response Speed Matters in User-Facing AI Products

In user-facing environments, response time shapes trust. An AI assistant may be capable of producing useful answers, but if the API layer feels slow in common interactions, users often become less willing to rely on it. For support tools, writing assistants, and workplace copilots, responsiveness is often part of the product itself.

That is why low latency matters so much at the API level. It helps teams build features that feel dependable under normal usage conditions, not just in isolated testing.

Why Low Latency Matters in Multi-Step AI Automation

Low latency becomes even more important in automation workflows. Many AI systems no longer stop at one request and one response. They may involve routing, repeated tool calls, context updates, or multi-step orchestration. In these systems, API delay compounds across the workflow.

For teams building these kinds of products, a fast API is not just convenient. It is a structural advantage, because the overall user experience depends on how efficiently the workflow moves from one step to the next.
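To make the compounding effect concrete, here is a minimal sketch that adds up per-step delay in a sequential workflow. The function name and the latency figures are illustrative assumptions, not measurements of Gemini 3 Flash API or any other service.

```python
def total_latency_ms(step_latencies_ms, overhead_ms_per_step=0):
    """Return end-to-end latency for steps that run one after another.

    Each step contributes its own API latency plus a fixed overhead
    (for example, routing or context updates between calls).
    """
    return sum(latency + overhead_ms_per_step for latency in step_latencies_ms)


# Hypothetical numbers: a five-step workflow with a slower and a faster API.
steps = 5
slower = total_latency_ms([1200] * steps)
faster = total_latency_ms([400] * steps)

print(f"slower API: {slower} ms, faster API: {faster} ms, saved: {slower - faster} ms")
```

In this sketch, an 800 ms difference per call becomes a 4 second difference for the user once five calls are chained, which is why per-call latency matters more as workflows grow longer.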

How Gemini 3 Flash API Pricing and Cost Shape Adoption Decisions

For many teams, API selection is as much an operational decision as a technical one. An API may provide access to strong underlying capabilities, but if the pricing structure makes experimentation expensive or makes production usage difficult to predict, adoption can slow down quickly. This is why Gemini 3 Flash API pricing and cost matter far beyond procurement discussions.

The more a product depends on repeated inference, multimodal requests, iterative prompt refinement, or long-running workflows, the more important API economics become. Teams building sustainable AI products do not only need high-quality outputs. They also need API access that supports a workable path from evaluation to deployment and then from deployment to scale.

Why Affordable Access Matters for Teams Testing New Workflows

Experimentation becomes harder when every iteration carries too much cost. Startups validating product ideas, developers tuning prompts, and teams testing tool-using workflows all need enough room to explore before settling on a stable implementation. If API access is too expensive during this stage, teams often limit experimentation too early.

That is one reason affordable access matters. It supports learning, iteration, and implementation quality before a workflow is locked into production. Platforms such as Kie.ai, which offers Gemini 3 Flash API access, position affordability as part of production readiness, which is especially relevant for teams trying to reduce friction between prototype work and deployment planning.

How Cost Efficiency Supports Production-Scale AI Workflows

After launch, API economics become even more important. Once an AI feature enters daily use, cost moves from being a planning concern to being a design constraint. Support assistants, AI search layers, automated internal tools, and personalized content systems may all generate large request volumes over time.

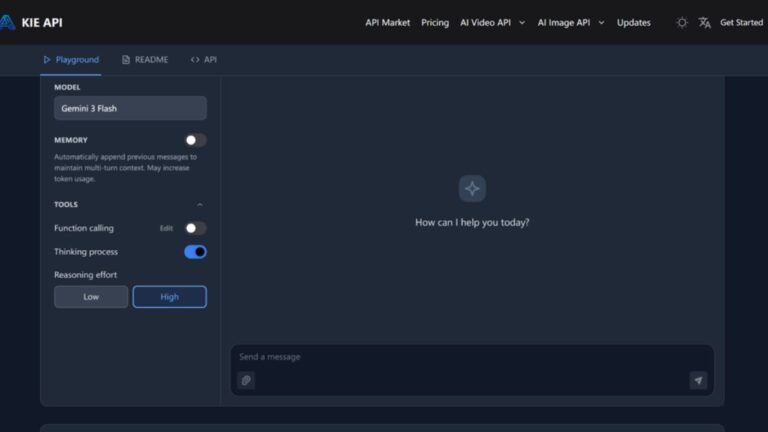

In that context, cost efficiency supports more than budget control. It affects how often AI features can be used, how broadly they can be deployed, and how comfortably teams can keep improving them. Kie.ai presents its Gemini 3 Flash API access around low-latency inference, configurable thinking levels, structured output, tool use, and automatic context handling, which makes the API easier to evaluate in production terms rather than as a narrow test endpoint.
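A rough way to see how request volume turns cost into a design constraint is a back-of-the-envelope estimate like the one below. This is only a sketch: it assumes token-based pricing quoted per million tokens, and every number in it is a placeholder rather than an actual Gemini 3 Flash API or Kie.ai price.

```python
def monthly_cost_usd(requests_per_day, input_tokens, output_tokens,
                     input_price_per_million, output_price_per_million,
                     days=30):
    """Estimate monthly spend for a feature with a steady request volume.

    Prices are expressed in USD per one million tokens (an assumed
    pricing model; check the provider's real price sheet).
    """
    cost_per_request_x1m = (input_tokens * input_price_per_million
                            + output_tokens * output_price_per_million)
    return requests_per_day * days * cost_per_request_x1m / 1_000_000


# Placeholder inputs: 10,000 requests per day, 1,000 input tokens and
# 200 output tokens per request, at made-up prices of 1.0 and 2.0 USD
# per million tokens.
estimate = monthly_cost_usd(10_000, 1_000, 200, 1.0, 2.0)
print(f"Estimated monthly cost: ${estimate:.2f}")
```

Running the same function with a team's own traffic figures and the provider's published prices gives a quick check on whether a feature stays affordable as usage grows.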

How Gemini 3 Flash API Supports Thinking in Real-World Applications

Another reason Gemini 3 Flash API is useful in modern workflows is that not every task requires the same level of reasoning. In practical systems, teams often need an API that can support a range of reasoning demands instead of treating every request as equally complex.

This is where Gemini 3 Flash thinking becomes relevant as an implementation concept. Rather than assuming that maximum reasoning should be applied everywhere, teams can think in terms of fit: which workflows need fast lightweight handling, and which ones benefit from deeper reasoning through the API?

That distinction matters in production because reasoning depth affects latency, cost, and output style at the same time.

Where Lightweight Thinking Works Best

Lightweight reasoning works well in tasks such as classification, extraction, summarization, FAQ handling, and routing. These workflows are often high-volume and do not always benefit from deeper deliberation. In many cases, what matters most is speed, consistency, and adequate output quality.

That makes lightweight thinking particularly useful in customer support pipelines, internal routing systems, and productivity features where efficiency matters more than extended inference.

Where Deeper Thinking Adds More Value

Deeper reasoning becomes more useful in tool-using agents, multi-step planning, coding assistance, structured business logic, and document-heavy problem solving. In these cases, the value of the API depends not just on returning text quickly, but on supporting more thoughtful and context-aware output when the workflow requires it.

The important point is not that every use case should maximize reasoning. It is that a practical API should let teams align reasoning depth with real workflow needs.
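One way to express that alignment in code is a small routing table that maps task types to a reasoning level before a request is sent. The sketch below is an assumption-laden illustration: the task labels and the "low" and "high" level names are hypothetical and are not taken from the Gemini 3 Flash API parameter set.

```python
# Task categories drawn from the examples discussed above.
LIGHTWEIGHT_TASKS = {"classification", "extraction", "summarization", "faq", "routing"}
DEEPER_TASKS = {"tool_agent", "planning", "coding", "business_logic", "document_analysis"}


def pick_thinking_level(task_type, default="low"):
    """Return a hypothetical reasoning level label for a task type.

    Unknown task types fall back to the default so that new workflows
    start on the cheaper, faster path until someone opts them in.
    """
    if task_type in DEEPER_TASKS:
        return "high"
    if task_type in LIGHTWEIGHT_TASKS:
        return "low"
    return default


for task in ("faq", "coding", "something_new"):
    print(task, "->", pick_thinking_level(task))
```

Keeping this decision in one place makes it easier to revisit later, for example when latency or cost data shows that a task was assigned more reasoning than it needs.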

Where Gemini 3 Flash API Fits Best in Modern AI Product Stacks

The most practical way to evaluate Gemini 3 Flash API is to look at where it fits inside actual product stacks. Its value becomes clearer in applications that combine fast response expectations with meaningful reasoning requirements and repeated production use.

Gemini 3 Flash API for Interactive Document and Visual Reasoning

One strong fit is document and visual reasoning. Enterprise assistants, internal knowledge tools, and research workflows often need to process PDFs, screenshots, diagrams, and mixed-format inputs while still returning useful answers quickly.

In these cases, the API is valuable because it helps teams connect multimodal understanding with real product responsiveness. That is much more useful than treating multimodal capability as a standalone feature.

Gemini 3 Flash API for Real-Time Agentic Assistance and Tool Use

Another good fit is real-time agentic assistance. Teams building systems that call tools, retrieve structured data, or coordinate actions across multiple steps need API access that is both responsive and reliable enough to support repeated orchestration.

This is where fast response, structured output, and tool-friendly behavior become especially important. For agentic systems, the API layer must help the workflow move efficiently rather than becoming the bottleneck.
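Structured output is only useful to an agent if the application checks it before acting on it. The sketch below shows one possible guard, assuming the model has been asked to reply with a JSON object containing `tool` and `arguments` keys. That response shape and the function name are assumptions made for illustration, not a documented Gemini 3 Flash API format.

```python
import json


def parse_tool_call(raw_text, allowed_tools):
    """Parse a model reply into (tool_name, arguments), or None if invalid.

    Rejects replies that are not JSON objects, that name a tool outside
    the allowed set, or whose arguments are not a JSON object.
    """
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    name = data.get("tool")
    args = data.get("arguments")
    if not isinstance(name, str) or name not in allowed_tools:
        return None
    if not isinstance(args, dict):
        return None
    return name, args


allowed = {"search_orders", "lookup_customer"}
reply = '{"tool": "search_orders", "arguments": {"order_id": "A123"}}'
print(parse_tool_call(reply, allowed))
print(parse_tool_call("not valid json", allowed))
```

Returning `None` for anything unexpected lets the orchestration layer retry or fall back without executing a malformed tool call.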

Gemini 3 Flash API for Rapid Prototyping and Design-to-Code Workflows

Gemini 3 Flash API also fits rapid prototyping environments. Product teams increasingly move between text specifications, UI references, screenshots, design notes, and implementation tasks. An API that can support these transitions quickly can help reduce iteration time across product and engineering workflows.

That kind of flexibility is useful for teams that want more than a single-purpose text generation endpoint.

Gemini 3 Flash API for Personalized Content and Experience Workflows

Personalized content systems are another practical match. Marketing workflows, adaptive user messaging, experience personalization, and high-frequency content generation all place pressure on cost and speed at the same time.

In those environments, Gemini 3 Flash API becomes relevant because the API must support repeated production use under real operating constraints, not just occasional experimentation.

What Teams Should Evaluate Before Choosing Gemini 3 Flash API

Before adopting any AI API, teams should evaluate more than capability claims. They should ask whether the workflow is latency-sensitive, whether multimodal input is a real requirement, whether reasoning depth needs to vary across tasks, and how much API cost will matter after usage grows.

They should also consider whether the API fits their broader product architecture. That includes how easily it supports structured outputs, repeated interactions, automation layers, and production reliability expectations.

These are the questions that turn API selection into a business and engineering decision rather than a simple feature comparison.

Why Gemini 3 Flash API Is a Practical Option for Scalable AI Workflows

The appeal of Gemini 3 Flash API comes from balance. Teams do not just need advanced underlying capabilities. They need API access that makes those capabilities usable under real product conditions. That means speed that supports interaction, cost that supports repeated deployment, and reasoning flexibility that supports different workflow types.

As more AI products move from pilot projects to real infrastructure, that balance matters more. Gemini 3 Flash API stands out because it aligns with how modern AI systems are actually built: through APIs that must be responsive, scalable, and operationally realistic from testing through production.