For any creative lead or performance marketer using generative tools, the “character drift” problem is the primary barrier to professional adoption. It is the phenomenon where a subject’s facial structure, hair texture, or clothing details subtly shift between generations, rendering a sequence of images or a video transition useless for coherent storytelling. When you are building a brand mascot or a consistent protagonist for a campaign, “close enough” isn’t an acceptable standard.

Solving this requires moving beyond simple text prompting and into a structured workflow that treats AI models as precise instruments rather than magic boxes. To achieve stability across varied outputs, teams are increasingly turning to specific model architectures and platforms that prioritize subject preservation.

The Technical Reality of Character Drift

AI models do not possess a “memory” of your character in the way a human artist does. They operate on probabilities within a latent space. When you ask for a “blonde woman in a blue suit,” the model samples from a massive distribution of possible blonde women and blue suits. Each generation is a new roll of the dice unless you provide a mathematical anchor, such as a fixed seed or a reference image.
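
The “roll of the dice” can be illustrated with a toy sketch. It is not how any particular image model samples; the function name `sample_latent` and the vector size are assumptions made for illustration. It only shows that a seeded random generator reproduces the same starting noise, which is why a recorded seed works as an anchor within one environment.

```python
import random


def sample_latent(seed: int, size: int = 8) -> list[float]:
    """Draw a small vector of Gaussian noise from a seeded generator.

    Hypothetical stand-in for the starting noise of a generation run.
    """
    rng = random.Random(seed)  # private generator, independent of global state
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


same_a = sample_latent(seed=1234)
same_b = sample_latent(seed=1234)
other = sample_latent(seed=1235)

print(same_a == same_b)  # same seed: the two noise vectors match
print(same_a == other)   # different seed: the noise vectors differ
```

In a real tool the seed only reproduces a result when the model, its version, and the other settings are also unchanged.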

Character drift typically happens because every word in a prompt influences the whole image, not only the part it describes. If your second prompt adds “running in the rain,” the model might adjust the facial features to appear more distressed or change the hair color because “rainy” training data often features darker, saturated tones. To combat this, creators must decouple the subject’s identity from the environmental variables.
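
One way to picture this decoupling is to keep the identity text in a locked object and vary only the scene text per shot. This is a minimal sketch under that assumption; `Identity` and `build_prompt` are hypothetical names, not part of any tool mentioned here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    """Fixed description of the subject; frozen so it cannot be edited per shot."""
    description: str


def build_prompt(identity: Identity, scene: str) -> str:
    """Join the locked identity text with a per-shot scene description."""
    return f"{identity.description}, {scene.strip()}"


hero = Identity("blonde woman in a blue suit")
shots = ["standing in a modern office", "running in the rain"]
prompts = [build_prompt(hero, scene) for scene in shots]

for prompt in prompts:
    print(prompt)
```

Keeping the identity string byte-for-byte identical does not guarantee a stable face on its own, but it removes one source of accidental variation.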

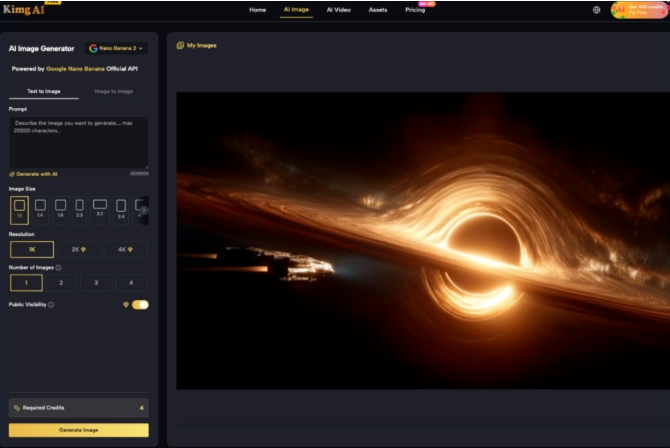

Stabilizing the Core with Nano Banana Pro

The first step in a professional workflow is selecting a base model capable of high-fidelity subject retention. This is where Nano Banana Pro enters the production pipeline. Unlike standard models that might prioritize artistic flair over structural accuracy, this architecture is designed for the high-resolution, precise rendering required for commercial assets.

When using this model, the goal is to establish a “source of truth.” This usually involves generating a neutral, high-quality portrait of the character. Once a specific seed and facial structure are locked in, that output serves as the reference for all subsequent work. The strength of this approach lies in the model’s ability to interpret specific descriptors—like the exact bridge of a nose or a unique eye shape—with repeatable accuracy across different lighting conditions.

Implementing a Reference-First Workflow

Professional teams rarely rely on a single prompt. Instead, they use a tiered approach to maintain identity. This starts with the Nano Banana Pro AI generator to create the foundational “K-level” images that define the character’s geometry.

- Seed Hardcoding: Once a character is generated that meets the visual requirements, the seed number must be recorded. While seeds are not universal across different models, they are essential for iterating within the same environment. Small adjustments to the prompt (e.g., changing “smiling” to “serious”) while keeping the seed the same can help maintain the underlying facial skeleton.

- Image-to-Image Influence: This is the most effective way to prevent drift. By uploading a base character image as a structural reference, you force the AI to respect the existing contours. This reduces the model’s creative freedom regarding the character’s identity while allowing it to change the background or pose.

- Inpainting for Detail Correction: Even with high-end models, minor drift is inevitable. A character might lose a specific mole or have their earring style changed. Inpainting allows a creator to mask only the drifted area and re-generate it using a more specific prompt, effectively “pinning” the identity back to the original intent.
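
The inpainting step depends on a mask that marks which pixels may change. The sketch below builds one as a plain grid, assuming the convention that 1 means “regenerate” and 0 means “keep”; tools differ on this convention, and `build_mask` is a hypothetical helper rather than any platform’s API.

```python
def build_mask(width: int, height: int,
               box: tuple[int, int, int, int]) -> list[list[int]]:
    """Return a height x width grid: 1 inside the box, 0 everywhere else.

    box is (left, top, right, bottom), with right and bottom exclusive.
    """
    left, top, right, bottom = box
    # Clamp the box to the canvas so an oversized selection cannot overflow.
    left, top = max(0, left), max(0, top)
    right, bottom = min(width, right), min(height, bottom)
    return [
        [1 if left <= x < right and top <= y < bottom else 0
         for x in range(width)]
        for y in range(height)
    ]


# Mask a 3 x 3 region (for example, around a drifted earring) on an 8 x 6 canvas.
mask = build_mask(8, 6, box=(2, 1, 5, 4))
masked_pixels = sum(sum(row) for row in mask)
print(masked_pixels)  # 9
```

Keeping the masked region as small as possible leaves the rest of the approved image untouched.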

The Role of Scene Identity

Character consistency is only half the battle; scene identity is the other. If your character moves from a modern office to a futuristic city, the lighting, color grading, and texture density must remain cohesive.

Using Nano Banana Pro in an editorial workflow allows for “style-locking.” By defining the aesthetic parameters—such as lens type (e.g., 35mm), lighting (e.g., Rembrandt lighting), and film grain—in a global style block, you ensure that the environment feels like it belongs to the same universe as the character, even as the location changes.
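
A global style block is easy to break by hand when prompts are edited shot by shot. As a rough sketch, a small check can flag prompts that no longer carry the shared block. The style text and the helper name `missing_style` are illustrative assumptions.

```python
STYLE_BLOCK = "35mm lens, Rembrandt lighting, subtle film grain"


def missing_style(prompts: list[str], style_block: str) -> list[int]:
    """Return the indexes of prompts that do not end with the shared style block."""
    return [
        index for index, prompt in enumerate(prompts)
        if not prompt.rstrip().endswith(style_block)
    ]


scene_prompts = [
    f"the character in a modern office, {STYLE_BLOCK}",
    f"the character in a futuristic city, {STYLE_BLOCK}",
    "the character on a rooftop, 85mm lens, flat lighting",  # style drifted
]

drifted = missing_style(scene_prompts, STYLE_BLOCK)
print(drifted)  # [2]
```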

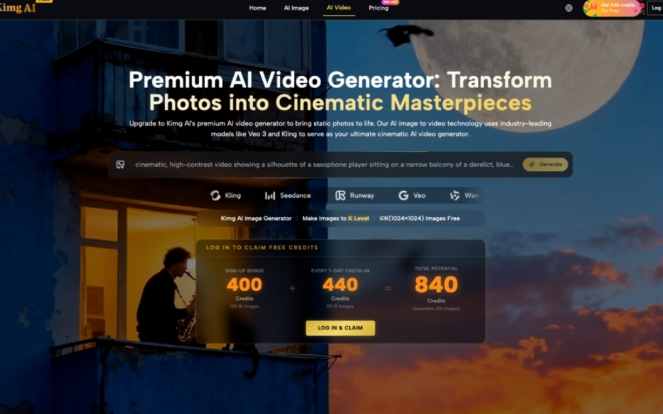

Bridging the Gap from Image to Video

The challenge of drift intensifies when moving from static images to video. In a video generation context, the model must maintain identity across every frame of a clip, typically 24 to 30 frames for each second of footage. Most failures in AI video occur when the model “hallucinates” new features during movement.

A common workflow involves taking a high-resolution frame generated by Nano Banana Pro AI and using it as the first frame (the starting keyframe) for a video model like Kling or Veo 3. This provides the video engine with a high-fidelity starting point. However, it is important to manage expectations here. Current video models still suffer from “identity bleed” during high-motion sequences. If a character turns their head 180 degrees, the model may fail to reconstruct the back of the head accurately if it wasn’t explicitly defined in the initial generation.

Limitations in Current Identity Tech

It is a mistake to claim that AI can currently achieve 100% perfect identity stability in every scenario. There are two significant areas where the technology still faces friction:

- Extreme Angles and Perspective: If you have a character reference from the front, generating that same character from a bird’s-eye view or a sharp low angle often results in visible drift. The model begins to guess features, and the “anchor” weakens.

- Complex Interactions: When a character interacts with an object—like putting on a pair of glasses or holding a specific branded product—the model often prioritizes the physics of the interaction over the stability of the face. This often requires multiple “passes” or manual compositing in post-production.

Scaling Production on Kimg AI

For teams managing multiple projects, the Kimg AI platform provides the necessary infrastructure to handle these complex workflows. The availability of diverse models, from Nano Banana to Flux and Google’s latest offerings, allows creators to choose the right tool for each stage of the “Identity Anchor” process.

The platform’s ability to upscale images to K-level resolution is particularly vital for character consistency. Drift is often most noticeable in low-resolution outputs where features are blurred. By upscaling the foundational character image early in the process, you provide a sharper, more defined reference point for the AI to follow in subsequent iterations. This reduces the ambiguity that leads to structural shifts.

Advanced Tactics: Negative Prompting and Weighting

To further refine stability, experienced operators use negative prompts to “fence in” the character’s look. If a model consistently tries to give a character freckles that shouldn’t be there, “freckles” or “skin blemishes” must be added to the negative prompt across the entire campaign.

Weighting also plays a role. In many advanced interfaces, you can tell the model to prioritize the “image prompt” (the character reference) over the “text prompt” (the action). By setting a high weight on the image reference, you effectively tell the AI, “Do not change the face, no matter what the text says.” This is the core of the identity anchor technique.
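
The two tactics can be combined in a single request builder so that campaign-wide negatives and the reference weight are never forgotten. This is a sketch only: the field names (`negative_prompt`, `reference_image`, `image_weight`) and the 0 to 1 weight range are assumptions, and real interfaces expose these controls under different names and scales.

```python
CAMPAIGN_NEGATIVES = ("freckles", "skin blemishes")


def build_request(action: str, reference_image: str, image_weight: float,
                  extra_negatives: tuple[str, ...] = ()) -> dict:
    """Assemble a hypothetical generation request with shared negatives."""
    if not 0.0 <= image_weight <= 1.0:
        raise ValueError("image_weight must be between 0 and 1")
    # Campaign negatives come first; dict.fromkeys drops duplicates and keeps order.
    negatives = list(dict.fromkeys(CAMPAIGN_NEGATIVES + tuple(extra_negatives)))
    return {
        "prompt": action,
        "negative_prompt": ", ".join(negatives),
        "reference_image": reference_image,
        "image_weight": image_weight,
    }


request = build_request(
    "running in the rain",
    reference_image="hero.png",
    image_weight=0.8,
    extra_negatives=("freckles", "hat"),
)
print(request["negative_prompt"])  # freckles, skin blemishes, hat
```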

The Workflow Checklist for Creators

To minimize character drift in your next project, follow this production-ready sequence:

- Define the Persona: Generate 10-20 variations using Nano Banana Pro until you find the “hero” image.

- Extract Metadata: Save the seed, the exact prompt, and the model version.

- Create a Reference Sheet: Use the AI Image Maker to generate the character from a few basic angles (front, 3/4 view, profile) to establish a 3D mental map for the AI.

- Use Consistent Style Anchors: Keep your lighting and camera prompts identical across all shots in a scene.

- Iterate with Image-to-Image: Never start a new frame from a blank text box; always use your hero image or the previous frame as a structural guide.
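
The “Extract Metadata” step in the checklist above can be as simple as a small JSON sidecar stored next to the hero image. The sketch below assumes a record with four fields; the class name, field names, and example values are illustrative and not tied to any platform’s export format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class GenerationRecord:
    """Everything needed to re-run a generation in the same environment."""
    seed: int
    prompt: str
    model_version: str
    reference_image: str


def to_sidecar(record: GenerationRecord) -> str:
    """Serialize the record to stable, human-readable JSON."""
    return json.dumps(asdict(record), indent=2, sort_keys=True)


def from_sidecar(text: str) -> GenerationRecord:
    """Rebuild the record from its JSON sidecar."""
    return GenerationRecord(**json.loads(text))


hero_record = GenerationRecord(
    seed=1234,
    prompt="blonde woman in a blue suit, neutral portrait",
    model_version="example-model-v1",  # placeholder, not a real version string
    reference_image="hero.png",
)

restored = from_sidecar(to_sidecar(hero_record))
print(restored == hero_record)  # True
```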

Conclusion: The Future of Subject Permanence

We are moving away from the era of “random” AI generation and into the era of directed AI production. Tools like Nano Banana Pro AI are not just about making pretty pictures; they are about providing the control required for serious narrative work.

While there is still an “uncertainty gap” in how models handle physics and extreme perspective shifts, the discipline of using identity anchors allows teams to produce content that was impossible just a year ago. By treating the AI as a collaborator that requires clear boundaries and consistent references, creators can finally overcome the drift and build truly cohesive visual worlds. The key is to stop prompting for results and start engineering for consistency.